Portfolio

These are a few examples of my coding and other technical work. Most of these examples are from projects in the past couple of years, since most of my work before then was closed-source development for Northrop Grumman and Google. The ordering is roughly reverse chronological, though some more prominent projects are bumped up a little.

Many of the works listed below also appear on my Open Source profile pages:

You may also be interested in my publications outside of this blog, my presentations, and press articles about me.

At the organizers' request, I assembled this workshop for the BazelCon Training Day on 2025-11-09. The example project was heavily based on my work Bzlmodifying rules_scala. The example project, its commit history, and its corresponding slides contain tons of (hopefully) helpful references.

See also: The Bzlmod Migration Bootcamp and the NoVA Live Music Mingle Fundraiser

I've volunteered to update the rules_scala project, which enables the Bazel build tool to build Scala programming language projects, to work with Bazel modules.

Bazel modules, a.k.a. Bzlmod, are a new way of integrating external dependency packages, or "repositories", into a Bazel project.

Bzlmod replaces the legacy WORKSPACE mechanism, which is slated for removal from Bazel 9.0.0 in 2025. Mainline is 7.4.1 as of 2024-11-14, and the 8.0.0 release is imminent.

My goal is to help unblock EngFlow customers and prospects, as well as other Bazel community members, from migrating their Scala projects to Bzlmod. This, in turn, will help them stay current with newer Bazel releases and Bazel rule sets for other languages and infrastructure. I've also been learning a lot about Bazel and enjoying the process of Open Source collaboration a great deal.

Rollup plugin to precompile Handlebars templates into JavaScript modules.

Enables an application's tests to open its own page URLs both in the browser and in Node.js using jsdom. Provides limited, though still very useful, support for opening pages that load external JavaScript modules when using jsdom.

This project incorporates the String Calculator kata to demonstrate Test-Driven Development in the context of a Test Pyramid-based testing strategy. Accompanies the Test Pyramid in Action training presentation.
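The String Calculator kata is usually stated as a sequence of small rules, each introduced by a new failing test. Below is a minimal Python sketch of where the first few rules typically lead, assuming the commonly published rule set (empty input, comma and newline delimiters, a `//` custom-delimiter header, and rejecting negatives). It is illustrative only, not the code from the project above.

```python
import re


def add(numbers: str) -> int:
    """Return the sum of the integers in `numbers`.

    Assumed rules (a common statement of the kata):
    - an empty string sums to 0
    - numbers may be separated by commas or newlines
    - an optional first line "//<delim>" adds a custom delimiter
    - negative numbers raise ValueError naming every negative
    """
    if not numbers:
        return 0

    delimiters = [",", "\n"]
    if numbers.startswith("//"):
        header, numbers = numbers.split("\n", 1)
        delimiters.append(header[2:])

    # re.escape keeps delimiters like "*" or "|" from acting as regex syntax.
    pattern = "|".join(re.escape(d) for d in delimiters)
    values = [int(token) for token in re.split(pattern, numbers)]

    negatives = [v for v in values if v < 0]
    if negatives:
        raise ValueError("negatives not allowed: " + ", ".join(map(str, negatives)))
    return sum(values)
```

Driven test-first, each rule in the docstring would typically get its own failing test before any code to pass it; for example, `add("//;\n1;2")` returns `3`.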

Mailing list system providing address validation and unsubscribe URIs. Implemented in Go using a number of Amazon Web Services:

- Lambda

- API Gateway

- DynamoDB

- Simple Email Service

- Simple Notification Service

- Web Application Firewall

Uses CloudFormation and the AWS Serverless Application Model (SAM) for deploying the Lambda function, binding to the API Gateway, managing permissions, and other configuration parameters.

Originally implemented to support the email subscription form on this site.

See "Please unsubscribe if unexpected" for more background, as well as other EListMan-tagged posts.

Amazon Simple Email Service Receipt Rule email forwarding system. Implemented in Go using Amazon Web Services Lambda and AWS Simple Email Service.

Uses CloudFormation and the AWS Serverless Application Model (SAM) for deploying the Lambda function, managing permissions, and other configuration parameters.

A fork of the original Bats framework that a few online collaborators and I made after years of inactivity from the original maintainer. Bats enables easy and robust automated testing of Bash scripts and other system programs.

I've contributed massive performance improvements, investigated and worked around a number of bugs in Bash itself, shepherded the first v1.0.0 release, and added new features. I've also developed Bash build scripts to help me build specific Bash versions on-demand to pinpoint and debug differences in Bash behavior between versions.

I had to resign my maintainership upon joining Apple in November 2018.

An application for allowing authenticated users to create and dereference custom links hosted on their own domain. I decided to write my own when I couldn't find an existing open source solution that fit my needs.

It's also proven a convenient excuse to finally do some real frontend programming, which I hadn't really done before. In the process, I began to grok how to do authentication with Passport, and learned how to test it effectively using supertest by capturing the authentication cookie.

In the process of trying to make the right thing easy for myself when it comes to browser testing, I've learned how to:

- use Vanilla JS in combination with handwritten HTML templates to develop the interface as a single page app, following the patterns in Serverless Single Page Apps (although the app isn't serverless, as serving redirects is still easiest with a server)

- create coverage reports using Istanbul, NYC, and PhantomJS

- generate live coverage reports when running Mocha tests directly in the browser thanks to istanbul-middleware and live-server

- run browser tests in parallel using Karma

- run end-to-end tests using selenium-webdriver

Also, while trying to collect browser test coverage on Windows Subsystem for Linux using PhantomJS, I proved in Microsoft/BashOnWindows#903 that a longstanding issue with PhantomJS was caused by WSL's lack of support for nonblocking connect(), which broke Qt WebSocket support. As a result, Microsoft implemented this feature, which resolved the Karma incompatibility issue for which the bug was originally filed.

I've also been trying to help debug npm 5's package-lock.json issues in npm/npm#16389. Also see my more detailed analysis added later to npm/npm#16389.

This is a framework I began developing in the summer of 2016 for writing modular, well-documented, and well-tested Bash script applications—in other words, "to make the right thing the easy thing," even for Bash scripts! It uses the Bash Automated Testing System (Bats) and contains an efficient and powerful library of Bats test assertions and other Bats helper functions.

The experience I've gained working with Bash and Bats as part of this project enabled me to improve Bats performance by more than 2x on UNIX, and by 10x-20x on Windows. It also inspired me to submit my request to become a maintainer of Bats.

See also my five-minute lightning talk introducing the framework at the Surge 2016 conference.

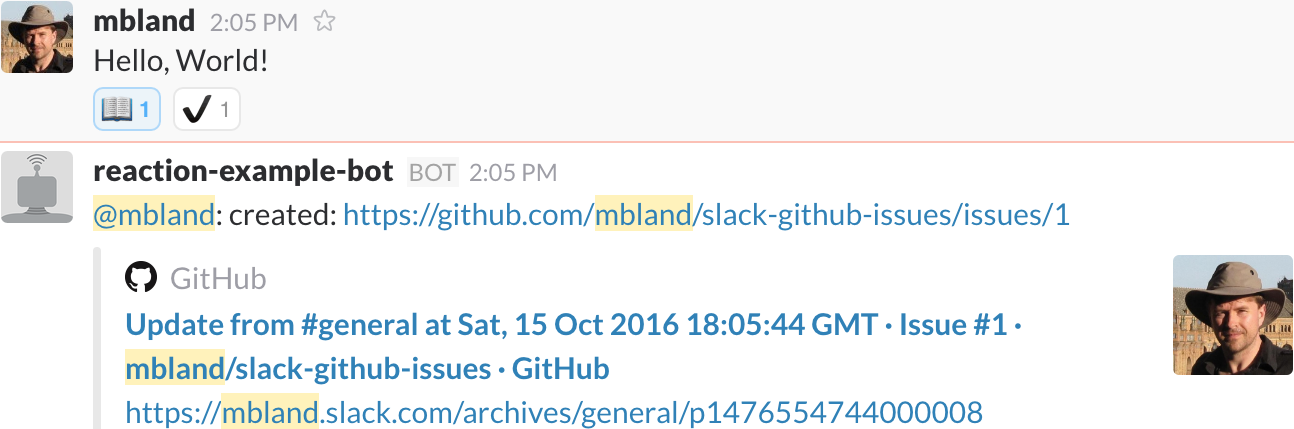

At the request of 18F Handbook designer and curator Andrew Maier, I wrote this plugin for the team's Hubot server. Whenever a user flags important information shared in a Slack discussion with a specific emoji reaction, Hubot will file a GitHub issue with a pointer to the information. Afterwards, whoever is responsible for cultivating the Handbook can triage the issues and make sure important information is not lost to the sands of Slack history.

This plugin makes it easy for anyone, not just technical people, to make material contributions to the organizational knowledge base. Though originally developed for the 18F Handbook, it's configurable to support any Slack team or set of GitHub repositories. It was also a good exercise in writing a small, thoroughly tested distributed system, and it made for a great instructional example, inspiring the Unit testing in Node.js tutorial described below.
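The heart of that configurability is a rule lookup: given a reaction name and the channel it appeared in, decide which repository, if any, should receive an issue. The plugin itself is a JavaScript Hubot plugin; the Python sketch below is hypothetical, and the `Rule` fields, `find_matching_rule`, `build_issue`, and the issue payload shape are illustrative names, not the plugin's actual configuration schema or API.

```python
from dataclasses import dataclass
from typing import Iterable, Optional, Tuple


@dataclass(frozen=True)
class Rule:
    """Hypothetical rule: a reaction in certain channels files issues in one repo."""
    reaction_name: str
    github_repository: str
    channel_names: Tuple[str, ...] = ()  # empty tuple means "any channel"


def find_matching_rule(rules: Iterable[Rule], reaction: str, channel: str) -> Optional[Rule]:
    """Return the first rule whose reaction (and channel, if restricted) matches."""
    for rule in rules:
        if rule.reaction_name != reaction:
            continue
        if rule.channel_names and channel not in rule.channel_names:
            continue
        return rule
    return None


def build_issue(rule: Rule, message_url: str) -> dict:
    """Build a hypothetical issue payload that points back to the Slack message."""
    return {
        "repository": rule.github_repository,
        "title": f"Flagged Slack message: {message_url}",
        "body": f"Filed via reaction :{rule.reaction_name}:\n\n{message_url}",
    }
```

Because the first match wins, listing channel-specific rules before catch-all rules lets a team route the same emoji to different repositories depending on where the message was posted.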

It also provided the opportunity to make an upstream contribution to Slack's hubot-slack integration package (written in CoffeeScript) to better support responding to reaction_added events. This support first appeared in version 4.1.0 via pull request #360, and was improved in version 4.2.0 via pull request #363. I also created the hubot-slack-reaction-example program to illustrate its basic usage.

Example of filing an issue by reacting to a message with a book emoji. After successfully filing an issue, the bot application using this package marked the message with a heavy_check_mark emoji and posted the issue URL to the channel.

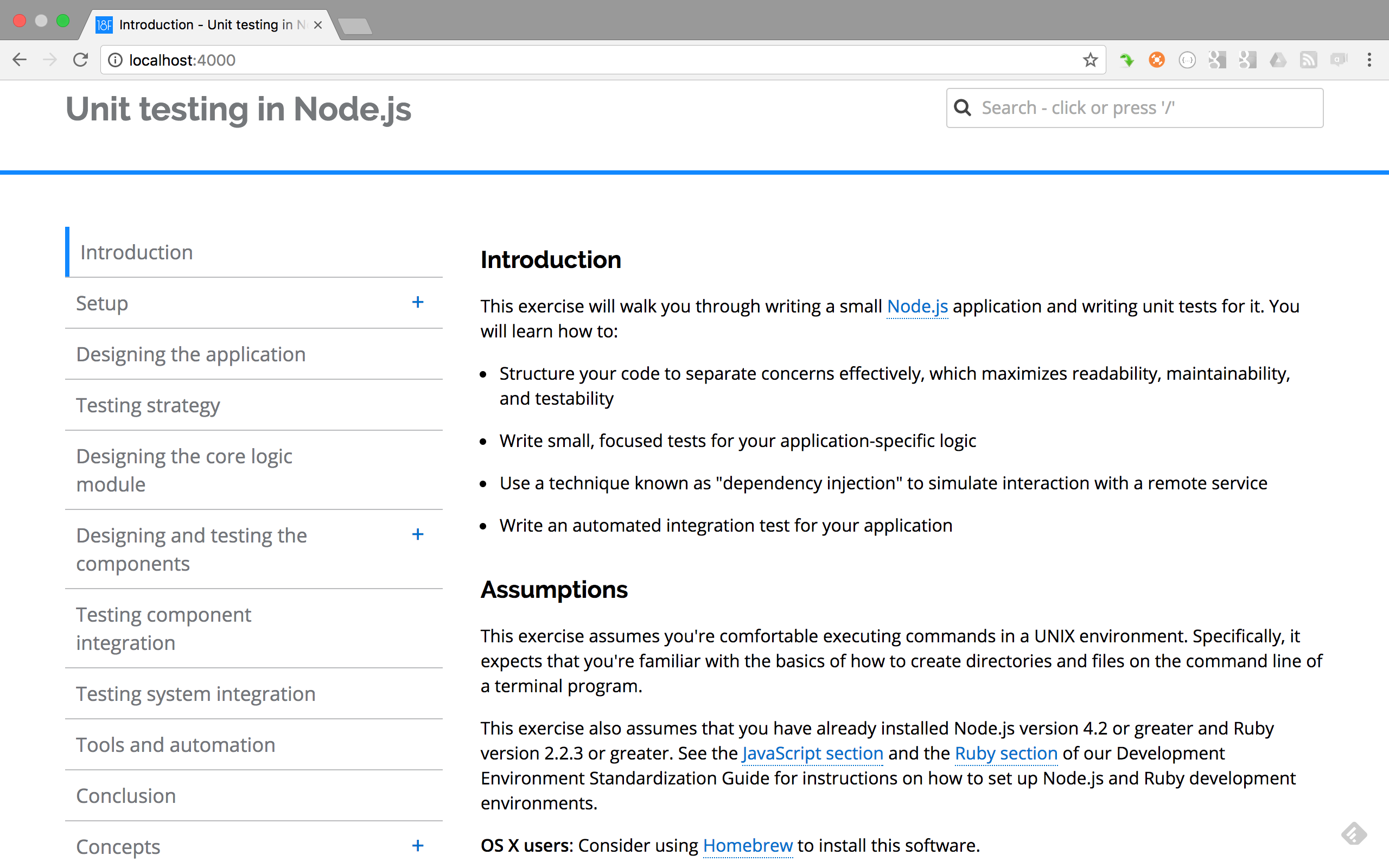

After completing the slack-github-issues Hubot plugin, I felt it made for a great, real-world example upon which to base a unit testing tutorial, since its tests spanned the gamut from small (unit) to medium (integration) to large (system).

The result was this tutorial, which builds up from testing straightforward (yet detailed) configuration logic, to external service API wrappers, to distributed event orchestration, to a small set of end-to-end tests. The instructional website is based on the 18F Guides Template described below.

I've only attempted to give this workshop once to date, to an audience of mostly Windows developers from the Tennessee Valley Authority in Chattanooga. The experience made it clear I have a lot to learn about designing and leading workshops, but the difficulty the developers had installing Ruby on Windows also led me to start my go-script-bash project shortly thereafter.

The front page of "Unit testing in Node.js" highlights the concepts covered in the tutorial, along with an outline reflected in the chapter table to the left.

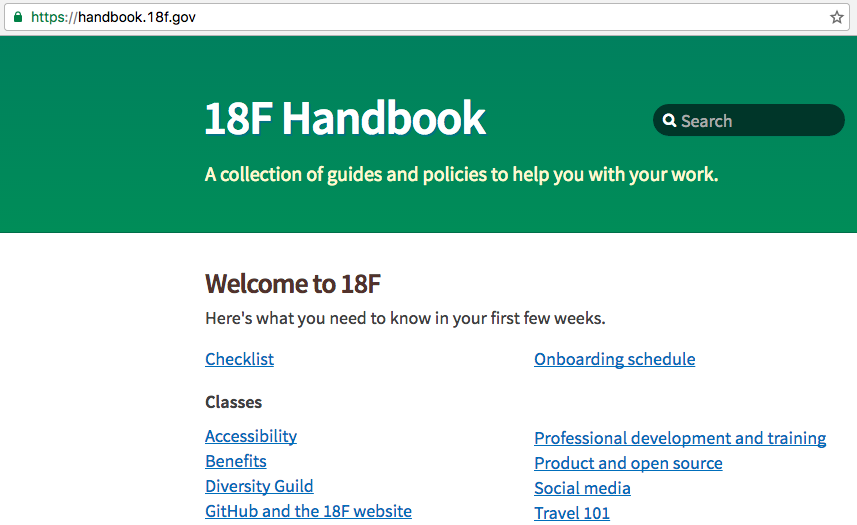

The 18F Handbook is the team's central repository of organizational knowledge. The descendant of my first 18F project, The Hub, it benefits from the expertise of actual frontend designers and developers and sports many of the Jekyll features I developed during the course of my work on The Hub and the 18F Guides. The cultivation of its material is greatly augmented by the slack-github-issues Hubot plugin that I developed specifically for the purpose.

Its primary researchers/designers/content architects were Andrew Maier and Melody Kramer, building on previous work led by Colin MacArthur and Ethan Heppner as part of the Documentation Working Group efforts to improve the 18F onboarding process.

The "18F Handbook" relies upon the same Jekyll advances as the 18F Guides to provide a rich, fast, safe experience from a static website. Note the jekyll_pages_api_search widget in the upper right corner. Other pages contain breadcrumbs reflecting the information hierarchy, thanks to some data manipulations via Jekyll plugin logic I added.

After my colleague Aidan Feldman integrated the lunr.js search engine into The Hub, using a document corpus generated by his jekyll_pages_api Jekyll plugin, I set about learning how to improve its performance. Eventually I came up with the jekyll_pages_api_search plugin, which produces the search corpus on the server as the site is generated, rather than in the user's browser, and provides Liquid and Sass directives to include a standard interface widget.
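The core idea of moving index construction from the browser to site generation time can be sketched as follows. This is a simplified Python illustration, not lunr.js or the plugin: it builds a plain inverted index (term to page URLs) with a naive tokenizer and no relevance scoring, and the page URLs and text are made up. Serializing the index once at build time means each visitor only downloads and parses it instead of rebuilding it.

```python
import json
import re
from collections import defaultdict


def tokenize(text: str) -> list:
    """Lowercase and split on non-alphanumerics (a deliberately naive tokenizer)."""
    return [t for t in re.split(r"[^a-z0-9]+", text.lower()) if t]


def build_index(pages: dict) -> dict:
    """Map each term to the sorted list of page URLs containing it."""
    index = defaultdict(set)
    for url, body in pages.items():
        for term in tokenize(body):
            index[term].add(url)
    return {term: sorted(urls) for term, urls in sorted(index.items())}


def search(index: dict, query: str) -> list:
    """Return URLs containing every query term (simple AND semantics)."""
    results = None
    for term in tokenize(query):
        urls = set(index.get(term, []))
        results = urls if results is None else results & urls
    return sorted(results or [])


# At site generation time: build and serialize once; the browser just loads it.
pages = {
    "/onboarding/": "Welcome to the team onboarding guide",
    "/travel/": "Travel policy and team guide",
}
serialized = json.dumps(build_index(pages))
```

In the browser, the equivalent of `search(json.loads(serialized), "team guide")` then costs only a parse and a few set intersections.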

To address Andrew Maier's accessibility concerns, I created a default search results page instead of relying on a drop-down list of results. As a life-long Vim user, I made sure the widget and results page were completely navigable by keyboard shortcuts. This plugin became a standard feature of both the 18F Guides style and the 18F Handbook.

Example of querying the search index and selecting from the search results page. The / key moves focus to the search query box, and the first result receives the tab index, making mouse-based navigation unnecessary.

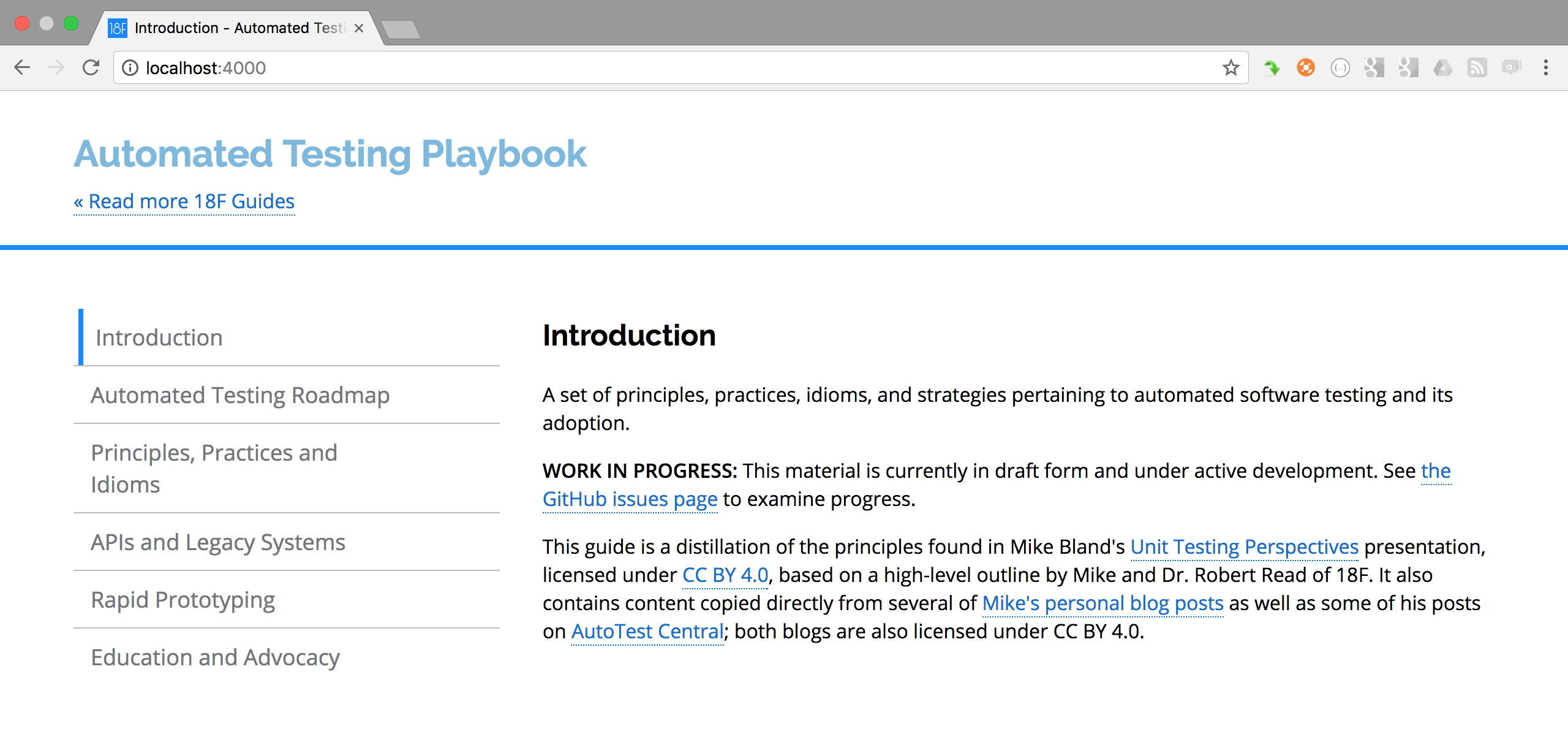

This documents a set of principles, practices, idioms, and strategies pertaining to automated software testing and its adoption.

This was the main artifact of the 18F Testing Grouplet, which I co-led with Alison Rowland, and also featured Josh Carp, Moncef Belyamani, and Shawn Allen.

The front page of the "Automated Testing Playbook" shows the chapters corresponding to the four broad categories of plays.

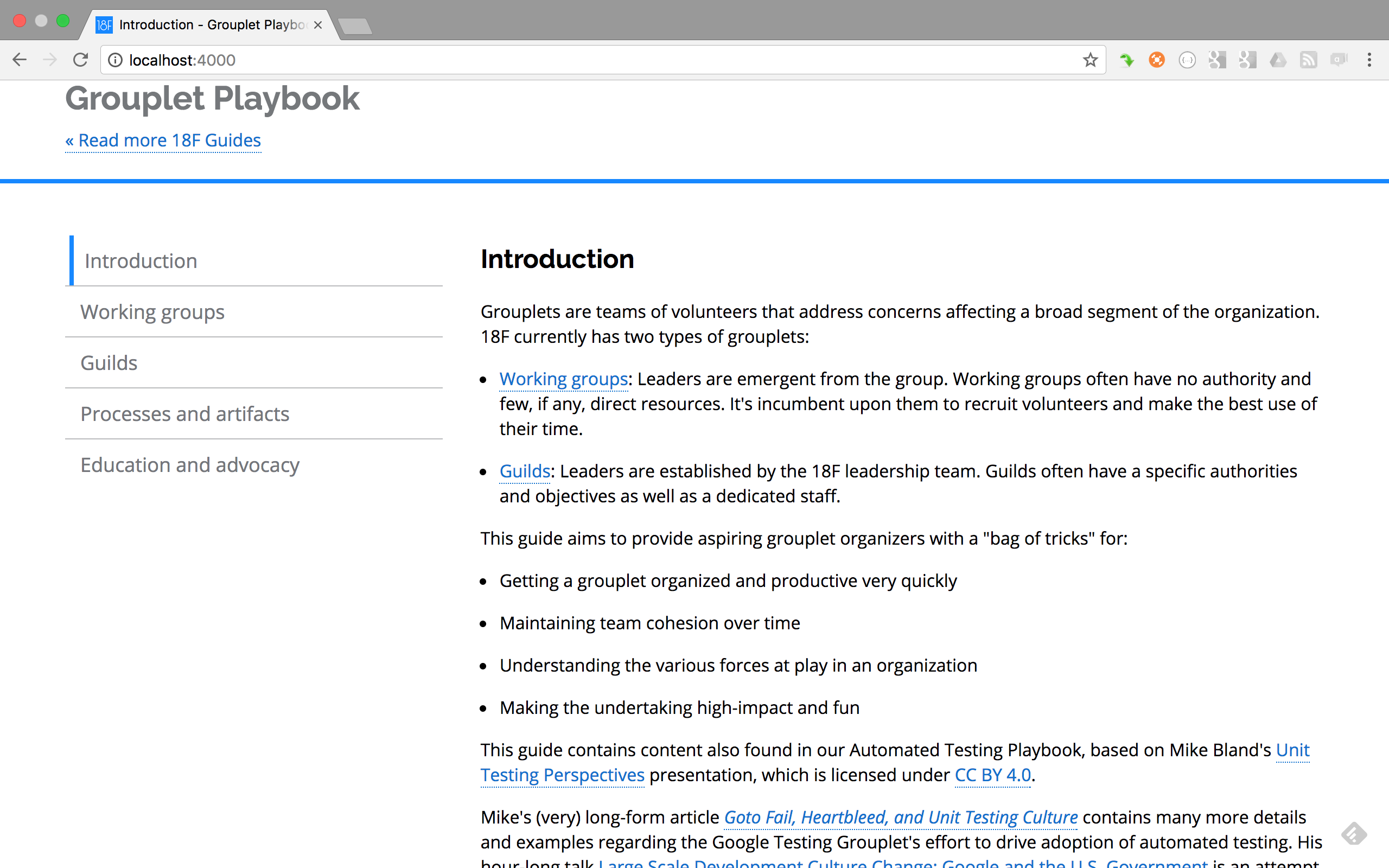

This is a guide to organizing grassroots initiatives for organization-wide improvement and enablement, a.k.a. "Grouplets".

This was the main artifact of the 18F Working Group Working Group, which I co-led with Gray Brooks, and also featured Nick Bristow and Nick Brethauer.

The front page of the "Grouplet Playbook" attempts to differentiate between "Working Groups" and "Guilds". The real substance is in the "Processes and artifacts" and "Education and advocacy" chapters.

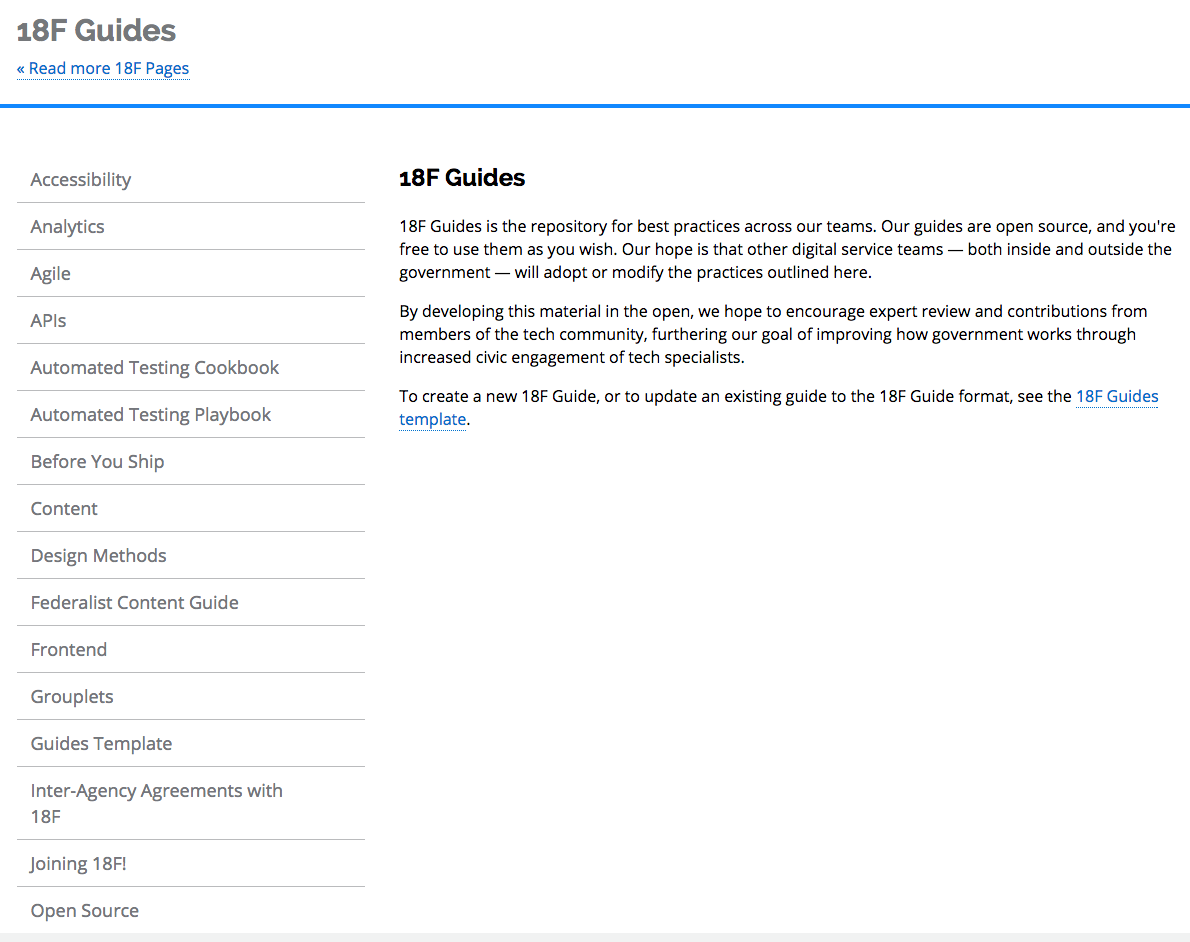

A core initiative of the Documentation Working Group that I led, the Guides are a series of documents curated by Grouplets and other groups to cultivate specialized domain knowledge and disseminate it to the rest of the organization. Most of the Guides were also available to the general public, as part of our mission to work in the open, share knowledge, and provide tools and practices for other agencies to adapt to their needs.

The Guides Template is both a template and a tutorial for writing a Guide that leads the reader through the entire process, from cloning the Guides Template repository, to replacing its content, to publishing a new Guide.

The Guides concept was so compelling, and the Guides Template so effective, that dozens of Guides proliferated by word of mouth. The underlying publishing tools are Jekyll, a static site generator already in use by much of the team (and the Web); GitHub, which the team used exclusively for source control; 18F Pages, a very lightweight server inspired by GitHub Pages; and the Nginx web server.

A core part of the Guides Template is the guides_style_18f Jekyll plugin, described below.

See the jekyll_pages_api_search image above for the Guides template and its search mechanism in action.

The 18F Guides home page shows a sample of the many guides that individual grouplets and other team members developed to cultivate and share their knowledge with the rest of the organization, and the world.

After being notified that our organization couldn't use GitHub Pages to publish canonical U.S. federal government information anymore, I wrote and launched the first version of this lightweight server just over a day later. It listens for GitHub webhooks and rebuilds Jekyll sites.

Running on a single Amazon EC2 instance behind an Nginx web server, with no live database component, it's secure, cheap, and easy to use for publishing content. It allowed for the proliferation of 18F Guides, was the original platform for the 18F Handbook, and was used for the initial development and launch of the U.S. Web Design Standards (USWDS).
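A webhook-driven rebuild server has to make two decisions per request: is the webhook authentic, and does the push touch a branch it publishes? The Python sketch below illustrates both under stated assumptions. pages-server itself is JavaScript, and how it authenticated webhooks or selected branches isn't described here. The sketch uses GitHub's `X-Hub-Signature-256` header format (`sha256=` followed by a hex HMAC-SHA256 of the raw request body); the function names and branch set are hypothetical.

```python
import hashlib
import hmac
import json
from typing import Optional, Set


def verify_github_signature(secret: bytes, body: bytes, signature_header: str) -> bool:
    """Check an X-Hub-Signature-256 value of the form "sha256=<hex digest>"."""
    expected = "sha256=" + hmac.new(secret, body, hashlib.sha256).hexdigest()
    # compare_digest avoids leaking timing information about partial matches.
    return hmac.compare_digest(expected.encode(), signature_header.encode())


def branch_to_rebuild(body: bytes, publish_branches: Set[str]) -> Optional[str]:
    """Return the pushed branch if it's one we publish from, else None."""
    ref = json.loads(body).get("ref", "")
    prefix = "refs/heads/"
    if not ref.startswith(prefix):
        return None  # tag pushes and other refs don't trigger a rebuild
    branch = ref[len(prefix):]
    return branch if branch in publish_branches else None
```

A real server would then check out the returned branch and run the Jekyll build; that step is omitted here.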

Alison Rowland helped me greatly by reviewing many pages-server pull requests and introducing me to the Mocha testing framework and Chai assertion library. Julia Elman from the USWDS team provided tons of great feedback, bug reports, and feature requests. Dave Zvenyach made me hip to JavaScript Promises for structuring the asynchronous logic; having only just begun writing JavaScript, I'd originally used a style resembling Google's C++ ControlFlow class.

During the course of expanding the capabilities of the server, I also made a small contribution to the Jekyll project (PR #3823).

A core part of the Guides Template is the guides_style_18f Jekyll plugin, which packages style, structure, and data management tools so that users can focus exclusively on their content rather than on data massaging and presentation mechanisms.

The original style was taken from the 18F Agile Guide, which was built upon the DOCter template produced by the Consumer Financial Protection Bureau (CFPB). Nick Bristow did a lot to ensure the style met Section 508 accessibility standards.

See the jekyll_pages_api_search image above for the plugin's style and its search mechanism in action.

I discovered this project, originally called google_auth_proxy, while implementing authentication for The Hub. As a static site generated using Jekyll, The Hub contained no server-side component to control authentication; but using google_auth_proxy in conjunction with the Nginx web server fit the bill.

Thanks to help from the maintainer, Jehiah Czebotar, I was able to introduce a new Provider interface into the code so that I could add support for 18F's (now defunct) MyUSA auth provider. Since then, others have added support for GitHub, LinkedIn, and more, prompting Jehiah to change the name to oauth2_proxy. I added a number of other features as well, and even managed to test it on Plan 9.

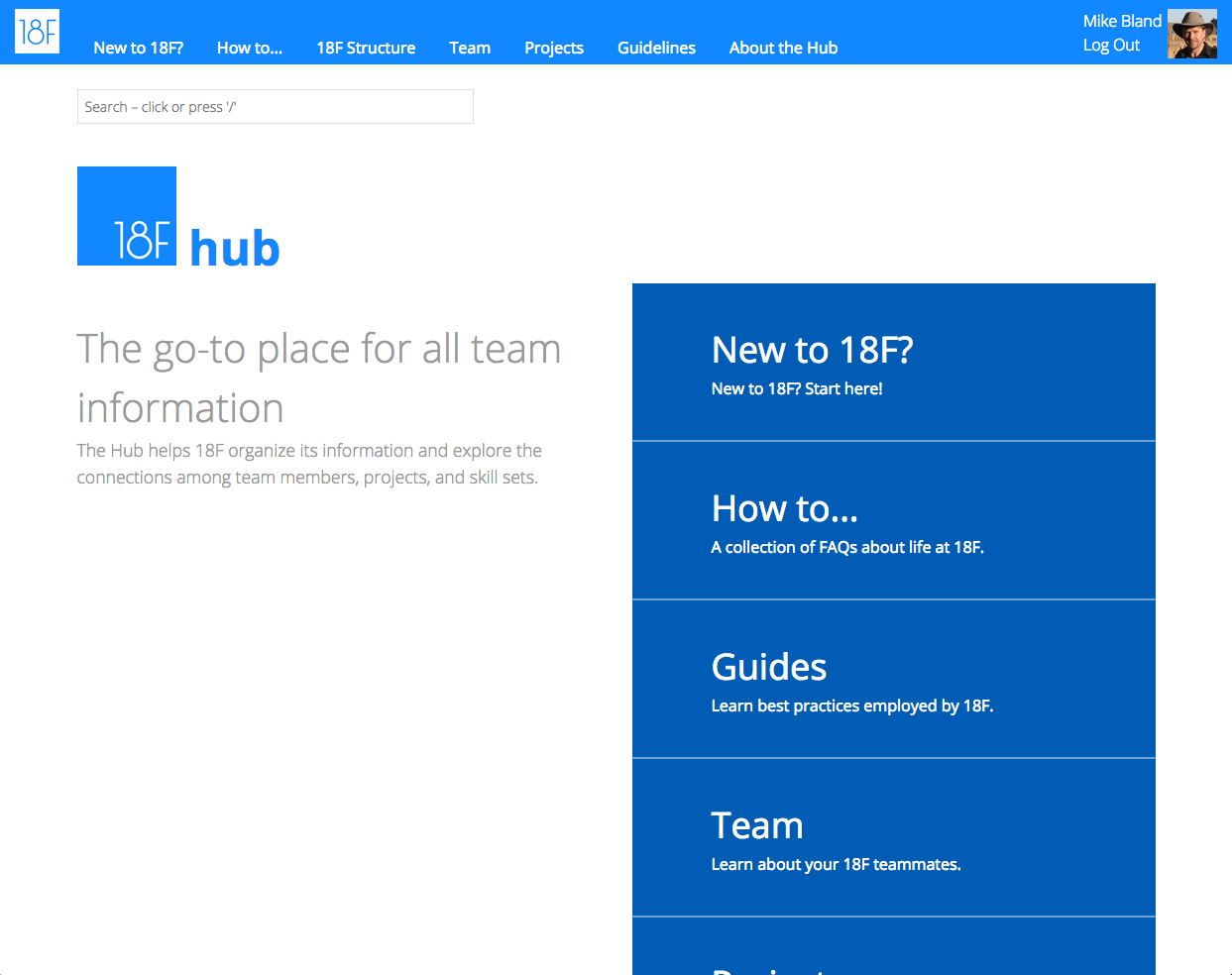

The predecessor of 18F Guides, the Team API, and the 18F Handbook, The Hub was intended to be a prototype to cultivate and disseminate important team-wide information, as well as show the connection between people, projects, and skill sets. It also tried to introduce the "snippets" practice to the team, though it didn't stick.

Aidan Feldman was my closest collaborator for most of the project's life. Michelle Hertzfeld took the first pass at the frontend design, and Jen Thibault took the second. The Hub was the first central artifact of the Documentation Working Group, prior to the introduction of 18F Pages/18F Guides.

The front page of The Hub, originally designed by Michelle Hertzfeld and updated by Jen Thibault, shows sections that eventually comprised the 18F Handbook, a link to the 18F Guides, the "Team" section that was built with what became the Team API logic, and the search widget, added by Aidan Feldman, that I would later repackage as the jekyll_pages_api_search plugin.

The Team API Ruby gem contains the team metadata graph-building logic extracted from the Hub.

The Team API server is a Node.js program that listens for GitHub webhooks indicating updates to .about.yml files, then processes the updates to generate a graph of people, projects, and skill sets exposed as a set of JSON endpoints. It was a little fragile, but worked as a proof-of-concept, and even supplied live updates to the 18F Dashboard for a while.

The .about.yml mechanism allows a project to publish YAML metadata that is easily maintained by project owners, visible and accessible to interested parties, and harvestable by tools and automated systems.

The Team API was the original consumer of this information, which in turn provided data to The Hub and the 18F Dashboard. The hope was that by making it easy for projects and grouplets to maintain this data, anyone inside or outside the organization could gain visibility into its people, structure, projects, and activity, and how we worked.

Recently, the TODO Group, a consortium of tech companies helping develop Open Source standards, expressed an interest in the .about.yml format, inspiring my ".about.yml background" blog post.

.about.yml was spearheaded by both the 18F Testing Grouplet (in pursuit of building a "code health" dashboard, never entirely realized) and the Working Group Working Group (to facilitate transparency and discovery of Grouplets). Alison Rowland got the code started and did most of the heavy lifting in the early days.

This was the blueprint for the 18F Pages/18F Guides server. I'd originally attempted to use Docker for this, but at the last minute was instructed not to use it. Fortunately it was a relatively straightforward process to migrate the Dockerfiles to Bash scripts in short order.

I include this project for two reasons. One, it ties together many of the servers listed above, and then some. This was the beginning of the "tools" bundle I'd hoped to pair with our practices (via the Guides) to promote knowledge sharing and enablement across federal agencies. Two, I haven't completely abandoned this project, and intend to revisit and productionize it one day, as I believe it could be useful not only to the federal government, but to many organizations that need a kick start to their knowledge sharing practices.

Signs and authenticates HTTP requests based on a shared-secret HMAC signature. Developed and tested in parallel across languages to ensure interoperability.
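The essential interoperability requirement is that every implementation builds byte-for-byte the same "string to sign" before computing the HMAC. The Python sketch below assumes a canonical form of the uppercased method, a fixed list of header values, and the request path, one per line, signed with SHA-256 and base64-encoded. That format, the `sign`/`authenticate` names, and the `"sha256 "` prefix are assumptions for illustration, not the hmacauth package's actual wire format.

```python
import base64
import hashlib
import hmac
from typing import Dict, List


def string_to_sign(method: str, path: str, headers: Dict[str, str], signed_headers: List[str]) -> str:
    """Canonical form: method, selected header values in a fixed order, then path."""
    lines = [method.upper()]
    # Missing headers sign as empty strings so both sides still agree on line count.
    lines += [headers.get(name, "") for name in signed_headers]
    lines.append(path)
    return "\n".join(lines) + "\n"


def sign(secret: bytes, method: str, path: str, headers: Dict[str, str],
         signed_headers: List[str]) -> str:
    """Return "sha256 <base64 HMAC>" over the canonical string."""
    message = string_to_sign(method, path, headers, signed_headers).encode()
    digest = hmac.new(secret, message, hashlib.sha256).digest()
    return "sha256 " + base64.b64encode(digest).decode()


def authenticate(secret: bytes, method: str, path: str, headers: Dict[str, str],
                 signed_headers: List[str], signature: str) -> bool:
    """Recompute the signature and compare in constant time."""
    expected = sign(secret, method, path, headers, signed_headers)
    return hmac.compare_digest(expected.encode(), signature.encode())
```

Because `authenticate` simply recomputes the signature, any change to a signed header, the path, or the secret makes verification fail. Header lookups here are case-sensitive for brevity, whereas HTTP header names are case-insensitive.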

A server that routes authenticated requests to multiple authentication servers based on the presence of specific headers or cookies in a request.
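A minimal Python sketch of that routing decision, assuming a hypothetical ordered table of `(kind, name, upstream)` entries where the first matching header or cookie wins. The header name, cookie name, and upstream URLs in the usage are made up, and the actual server's configuration format isn't described here.

```python
from typing import Mapping, Optional, Sequence, Tuple

# A route is (kind, name, upstream), where kind is "header" or "cookie".
Route = Tuple[str, str, str]


def choose_upstream(
    headers: Mapping[str, str],
    cookies: Mapping[str, str],
    routes: Sequence[Route],
    default: Optional[str] = None,
) -> Optional[str]:
    """Return the upstream for the first route whose header or cookie is present."""
    # HTTP header names are case-insensitive; cookie names are not.
    header_names = {name.lower() for name in headers}
    for kind, name, upstream in routes:
        if kind == "header" and name.lower() in header_names:
            return upstream
        if kind == "cookie" and name in cookies:
            return upstream
    return default
```

For example, with a signature-header route listed before a session-cookie route, a request carrying both goes to the HMAC authentication server, and a request carrying neither falls through to `default`.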

Proxy server that signs and authenticates HTTP requests using an HMAC signature; uses the hmacauth Go package.

Prototype/proof-of-concept of a Node.js server that responds to queries for Lunr.js indexes. The idea is that it can be used to consolidate search across a set of statically-generated 18F Pages/18F Guides.

I wrote heartbleed_test.c in response to those who claimed that the OpenSSL "Heartbleed" bug was essentially untestable and unavoidable. The build.sh script that accompanies it automatically downloads the source for OpenSSL-1.0.1-beta1 (in which the bug was introduced) and OpenSSL-1.0.1g (in which the bug was fixed), patches the test into each copy of the source, builds each version, and runs the test in each. The fixed version produces no output, indicating success; the "bleeding" version prints:

TestDtls1Heartbleed failed: expected payload len: 0 received: 1024 sent 26 characters "HEARTBLEED " received 1024 characters "HEARTBLEED \xde\xad\xbe\xef..." ** TestDtls1Heartbleed failed **
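The fix in OpenSSL 1.0.1g amounts to silently discarding any heartbeat whose claimed payload length, plus the type byte, the two-byte length field, and the minimum 16 bytes of padding, exceeds the size of the record actually received. The Python sketch below is a toy model of that check, not OpenSSL's code: `respond_to_heartbeat`, `make_request`, and the simulated "adjacent memory" are hypothetical stand-ins for the C buffer overread.

```python
import struct

HEARTBEAT_REQUEST = 1
HEARTBEAT_RESPONSE = 2
MIN_PADDING = 16  # RFC 6520: at least 16 bytes of random padding follow the payload


def respond_to_heartbeat(record: bytes, check_length: bool = True) -> bytes:
    """Return a toy heartbeat response that echoes the request payload.

    check_length=False mimics the Heartbleed bug: the sender's claimed
    payload length is trusted, so the "echo" reads past the real payload.
    (Response padding is omitted for brevity.)
    """
    if check_length and len(record) < 1 + 2 + MIN_PADDING:
        return b""  # too short to be a valid heartbeat; discard silently
    msg_type = record[0]
    (claimed_len,) = struct.unpack(">H", record[1:3])
    if msg_type != HEARTBEAT_REQUEST:
        return b""
    if check_length and 1 + 2 + claimed_len + MIN_PADDING > len(record):
        return b""  # claimed payload doesn't fit in the record; discard silently
    # Stand-in for whatever process memory happens to follow the record.
    adjacent_memory = record + b"\xde\xad\xbe\xef" * 256
    payload = adjacent_memory[3:3 + claimed_len]
    return bytes([HEARTBEAT_RESPONSE]) + struct.pack(">H", claimed_len) + payload


def make_request(payload: bytes, claimed_len: int) -> bytes:
    """Build a heartbeat request whose length field may lie about the payload."""
    return bytes([HEARTBEAT_REQUEST]) + struct.pack(">H", claimed_len) + payload + bytes(MIN_PADDING)
```

A request carrying a 10-byte payload but claiming 1024 bytes gets no response when the check is on, and 1024 bytes of "memory" echoed back when it's off, the same expected-0-received-1024 contrast the test output reports.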

The structure of the test code follows what I call the "Pseudo x-Unit Pattern", which I originally stumbled upon when writing the goto fail unit/regression test. This test was eventually adapted to the OpenSSL coding style (instead of the Google coding style used in the original) and submitted as ssl/heartbeat_test.c.

I wrote several blog posts about Heartbleed, Testing on the Toilet Episode 330: "While My Heart Gently Bleeds", and the "Goto Fail, Heartbleed, and Unit Testing Culture" article for Martin Fowler.

For a brief time, I offered to help the OpenSSL team improve its automated testing, and they accepted. However, after joining the government in November 2014, I became consumed with my new job and never made the time to revisit my OpenSSL efforts. Since then, heartbeat support has been removed, along with the test. (See pull requests #1669 and #1928.)

Before the Heartbleed bug was discovered, I wrote tls_digest_test.c and an accompanying patch for Apple's Secure Transport library in response to those who claimed that the goto fail bug was essentially untestable and unavoidable. The Security-55471-bugfix-and-test.tar.gz bundle contains a build.sh script that downloads the buggy version of the Secure Transport code from Apple, applies the patch, builds just the affected code, and runs the test without requiring the full set of dependencies needed to build the entire library. It requires OS X and Xcode. Changing the code to reintroduce the extra goto fail statement into the handshake algorithm triggers a test failure:

Executing Security-55471/libsecurity_ssl/build/libsecurity_ssl.build/Debug/libsecurity_ssl.build/Objects-normal/x86_64/tls_digest_test TestHandshakeFinalFailure failed: expected FINAL_FAILURE, received SUCCESS 1 test failed Test failed

This was the first test I wrote in which I stumbled upon what I call the "Pseudo x-Unit Pattern", in which the code resembles a test using an xUnit-based framework without actually using a framework at all. I also wrote a guest blog post for the (now-defunct) AutoTest Central and a flurry of other goto fail posts on this site, gave a lightning talk at an Automated Testing Boston Meetup event, wrote Testing on the Toilet Episode 327: "Finding More Than One Worm in the Apple", and compiled the lightning talk into the "Finding More Than One Worm in the Apple" article for ACM Queue. The Queue editors then submitted the article for publication in the July 2014 issue of Communications of the ACM, the full text of which you can access via the "Finding More Than One Worm in the Apple" page on this site.
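The pattern's shape carries over to any language. The original tests are C; the Python sketch below only illustrates the structure with no test framework imported: a fixture built fresh for each test, test functions that return a failure description (or `None`), and a small runner that reports and counts failures. The `verify_handshake` function and its `skip_last` flag are hypothetical stand-ins for the Secure Transport code and the duplicated `goto fail;`, not a model of the real handshake.

```python
import sys
from dataclasses import dataclass, field
from typing import Callable, List, Optional

SUCCESS = "SUCCESS"
FINAL_FAILURE = "FINAL_FAILURE"


def verify_handshake(steps: List[Callable[[], bool]], skip_last: bool = False) -> str:
    """Toy stand-in for the code under test: every verification step must pass.

    skip_last=True is a hypothetical model of the duplicated `goto fail;`,
    which jumped past the final check while the status still read "success".
    """
    for step in (steps[:-1] if skip_last else steps):
        if not step():
            return FINAL_FAILURE
    return SUCCESS


@dataclass
class Fixture:
    """Fresh state for each test, playing the role of xUnit's setUp()."""
    steps: List[Callable[[], bool]] = field(default_factory=lambda: [
        lambda: True,   # e.g. hash update succeeds
        lambda: True,   # e.g. hash finalization succeeds
        lambda: False,  # signature verification fails
    ])


def handshake_final_failure_test(fixture: Fixture, skip_last: bool) -> Optional[str]:
    """A 'test case': returns None on success or a failure description."""
    result = verify_handshake(fixture.steps, skip_last)
    if result != FINAL_FAILURE:
        return f"expected {FINAL_FAILURE}, received {result}"
    return None


def run_tests(skip_last: bool = False) -> int:
    """A 'test runner': build a fixture per test, report failures, count them."""
    failures = 0
    for test in [handshake_final_failure_test]:
        error = test(Fixture(), skip_last)
        if error is not None:
            print(f"{test.__name__} failed: {error}", file=sys.stderr)
            failures += 1
    if failures:
        print(f"{failures} test{'s' if failures != 1 else ''} failed", file=sys.stderr)
    return failures
```

A `main` that exits non-zero whenever `run_tests()` reports failures would complete the pattern, letting a build script treat the test like any other program.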

I worked on the Instant Indexing Tier, a distributed system that indexes all new content on the World Wide Web within minutes. Developed features for removing obsolete content, spam blocking, taking inventory of signals imported from other indexing systems, and reindexing to take advantage of rapid signal changes. Refactored a core indexing component to achieve greater-than-expected code reuse across multiple Google websearch systems. Deployed binaries, monitored and resolved issues in close collaboration with our Site Reliability Engineers—I refer to this as "doing DevOps before realizing it had a name".

Pyfakefs is a fake file system module for Python that I started back in 2006, announcing it internally at Google in an early Testing on the Toilet episode. My motivation was less to isolate code under test from interacting with the filesystem than to avoid having a plethora of little test files littering the code base. The isolation aspect was a nice side effect, however; and having the test "files" defined in the test case itself made it easier to write, read, and maintain large numbers of file system manipulation tests. (Our overloaded Perforce server was also happy to manage just one test file.)

Over time, a number of contributors expanded the module far beyond what I'd intended; but thanks to rigorous review and unit testing, it both grew to fulfill many people's needs and remained stable enough that existing users didn't break. Well, one specific breakage comes to mind, and that was because the code's interface became stricter; the client only had to add a proper "w" parameter to an open() call and all was well. (Every other client opening a file for writing already had this parameter.)

By the time I left, Pyfakefs was used in over 900 tests inside Google; the current GitHub page (linked above) reports over 2,000. It's also now available on the Python Package Index (PyPI), thanks to the current maintainers who stepped up after I abandoned the project upon leaving Google.

A while back, I added the with statement to the Python language in version 2.6. As I was primarily a C++ programmer at the time, and a firm believer in the Resource Acquisition Is Initialization (RAII) idiom, the idea of adding a mechanism to support the idiom in Python appealed to me. With hints from Neal Norwitz, I produced the patch to add the with statement, and Guido van Rossum cleaned it up and submitted it. Then I had to write a bunch of Python code without it before Google ever upgraded to Python 2.6.
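The appeal for an RAII fan is that `__exit__` runs whether the block completes normally or raises, tying cleanup to scope much as a C++ destructor does. A minimal sketch in modern Python 3; `Resource` and `resource` are hypothetical names, and the second form uses the standard library's `contextlib.contextmanager` decorator.

```python
from contextlib import contextmanager
from typing import Iterator, List


class Resource:
    """Hypothetical resource whose release must be guaranteed, RAII-style."""

    def __init__(self, name: str, log: List[str]) -> None:
        self.name = name
        self.log = log

    def __enter__(self) -> "Resource":
        self.log.append(f"acquire {self.name}")
        return self

    def __exit__(self, exc_type, exc, tb) -> bool:
        # Runs whether the block exits normally or via an exception,
        # much like a C++ destructor running during stack unwinding.
        self.log.append(f"release {self.name}")
        return False  # don't swallow the exception


@contextmanager
def resource(name: str, log: List[str]) -> Iterator[str]:
    """The same guarantee written as a generator with try/finally."""
    log.append(f"acquire {name}")
    try:
        yield name
    finally:
        log.append(f"release {name}")
```

As with C++ destructors, nested resources are released in the reverse order of acquisition: `with Resource("a", log), resource("b", log):` releases `b` before `a`.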

In 2005 Google's code was largely untested, untestable, and lacking tools to fix this. I helped lead the successful effort to drive automated testing adoption, as illustrated in many of my presentations and quoted in Chapters 10 and 21 of The DevOps Handbook by Gene Kim et al. Specific initiatives included:

Jun 2007 – Jan 2009: Test Mercenaries. Joined, then managed a team of developers dedicated to improving design and testing techniques while embedded in other teams for months at a time.

Sep 2005 – Sep 2007: Testing Grouplet. Joined, then led a grassroots, 20%-time volunteer effort to improve testing techniques and tools. Drove initiatives such as the weekly Testing on the Toilet newsletter and Test Certified (a testing plan for individual teams). Partnered with tools teams, culminating in the Test Automation Platform, which tests every committed change within minutes.

Sep 2007 – Nov 2008: Fixit Grouplet. Recruited all of Google engineering for one-day, intensive sprints of code reform and tool adoption. Organized four company-wide Fixits, the last involving 100+ volunteers in 20+ offices in 13 countries. Led the grouplet to document its collective experience in order to make Fixits of all sizes easier to run and more effective.

Contributed to the backend of SrcFS, a distributed file system used to scale Google's source repository. Worked on efforts to improve build speed and scale Perforce usage, as well as on Testing Grouplet-driven tasks.

Worked on rendering of radar data and Vector Product Format (VPF) nautical chart data in support of shipboard navigation and port monitoring systems for the U.S. Coast Guard. Designed and implemented a stable, efficient replacement module for a port monitoring system to render data from multiple radars to multiple X-based clients. Developed a spatial query engine, algorithms, and data structures to speed up chart rendering (from 90 seconds to 5 seconds) and add features.